30-Minute Behavioral QA Before Deploy: 12 Bugs That Actually Break Vibe-Coded Apps

Session, Authorization, Duplicate Requests, LLM Resilience — What Static Analysis Can't Catch

TL;DR: Static analysis catches "code smells." Behavioral QA catches "actual breakage."
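To make that distinction concrete, here is a minimal sketch of one behavioral check: submitting the same request twice and counting what gets created. Everything in it is a hypothetical stand-in, not code from a real app: `OrderService`, `create_order`, `check_duplicate_submit`, and the `idempotency_key` parameter are assumptions chosen for illustration. Both code paths are valid Python that a linter would accept, yet only one survives a double submit.

```python
import uuid


class OrderService:
    """Hypothetical in-memory stand-in for an app's 'create order' endpoint."""

    def __init__(self):
        self.orders = {}      # order_id -> payload
        self._seen_keys = {}  # idempotency key -> order_id

    def create_order(self, payload, idempotency_key=None):
        # Replay of a request already handled: return the existing order.
        if idempotency_key is not None and idempotency_key in self._seen_keys:
            return self._seen_keys[idempotency_key]
        order_id = str(uuid.uuid4())
        self.orders[order_id] = payload
        if idempotency_key is not None:
            self._seen_keys[idempotency_key] = order_id
        return order_id


def check_duplicate_submit(service, use_key):
    """Behavioral check: send the same request twice, count resulting orders."""
    key = "checkout-123" if use_key else None
    payload = {"item": "widget", "qty": 1}
    service.create_order(payload, idempotency_key=key)
    service.create_order(payload, idempotency_key=key)
    return len(service.orders)


if __name__ == "__main__":
    # Without a key, the double submit creates two orders.
    print("without key:", check_duplicate_submit(OrderService(), use_key=False))
    # With a key, the replayed request is recognized and only one order exists.
    print("with key:", check_duplicate_submit(OrderService(), use_key=True))
```

In a real staging run the same idea would apply to an actual HTTP endpoint: fire the request twice and assert on the resulting state, rather than reading the handler code.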

Prerequisites

This is NOT about hacking. It is a behavioral QA routine you run against your own app, in a staging environment, to reduce risk before you deploy.

Related Posts

AI Engineering

LLM Inference Optimization Part 4 — Production Serving

Production deployment with vLLM and TGI. Continuous Batching, Speculative Decoding, memory budget design, and throughput benchmarks.

AI Engineering

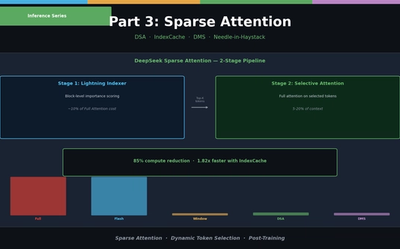

LLM Inference Optimization Part 3 — Sparse Attention in Practice

Sliding Window, Sink Attention, DeepSeek DSA, IndexCache, and Nvidia DMS. From dynamic token selection to Needle-in-a-Haystack evaluation.

AI Engineering

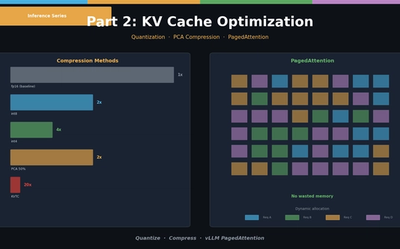

LLM Inference Optimization Part 2 — KV Cache Optimization

KV Cache quantization (int8/int4), PCA compression (KVTC), and PagedAttention (vLLM). Hands-on memory reduction code and scenario-based configuration guide.