LangGraph in Practice — Reflection Agent and Planning Patterns

Upgrade ReAct with Tool Calling, then build Reflection and Planning Agents with LangGraph.


The ReAct Agent we built in Part 1 has one critical weakness: it doesn't know when it's wrong. Even if it answers "Seoul's population is 50 million," it remains fully confident. The Reflection pattern gives agents the ability to check their own output, and the Planning pattern gives them the ability to break complex tasks into manageable steps.

Series: Part 1: ReAct Pattern | Part 2 (this post) | Part 3: MCP + Multi-Agent | Part 4: Production Deployment

Self-Critique: How Agents Verify Their Own Output

People revise their writing after a first draft, because a first draft is rarely perfect. The same goes for LLM agents. Expecting a perfect answer in one shot is unrealistic; building a loop in which the agent checks and improves its own output tends to produce noticeably better results.
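The draft, critique, revise loop can be sketched without any framework. This is a minimal illustration under stated assumptions, not the LangGraph API or the implementation built later in this post: `reflect_loop`, `fake_generate`, and `fake_critique` are hypothetical names, and the two "LLM" functions are toy stand-ins so the loop runs without a model. In LangGraph, the same control flow could be expressed as generator and critic nodes joined by a conditional edge.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

# Hypothetical signatures for the two LLM roles (a real agent would call a model here).
Generator = Callable[[str, Optional[str]], str]  # (task, feedback or None) -> answer
Critic = Callable[[str, str], Optional[str]]     # (task, answer) -> feedback, or None if OK


@dataclass
class ReflectionResult:
    answer: str
    critiques: List[str] = field(default_factory=list)
    rounds: int = 0  # number of revisions performed


def reflect_loop(task: str, generate: Generator, critique: Critic,
                 max_rounds: int = 3) -> ReflectionResult:
    """Draft, then alternate critique and revision until the critic
    approves or max_rounds revisions have been made."""
    result = ReflectionResult(answer=generate(task, None))  # first draft, no feedback yet
    for _ in range(max_rounds):
        feedback = critique(task, result.answer)
        if feedback is None:  # critic is satisfied: stop early
            break
        result.critiques.append(feedback)
        result.answer = generate(task, feedback)  # revise using the critique
        result.rounds += 1
    return result


# Toy stand-ins (hypothetical) so the loop runs without any model or API key.
def fake_generate(task: str, feedback: Optional[str]) -> str:
    if feedback is None:
        return "Seoul's population is 50 million."
    return "Seoul's population is roughly 10 million."


def fake_critique(task: str, answer: str) -> Optional[str]:
    if "50 million" in answer:
        return "50 million is implausibly high for one city; re-check the figure."
    return None


result = reflect_loop("What is Seoul's population?", fake_generate, fake_critique)
print(result.rounds, result.answer)  # 1 Seoul's population is roughly 10 million.
```

The `max_rounds` cap is the important design choice here: a critic may never be fully satisfied, and without a budget the loop could keep spending tokens indefinitely. Note that in this sketch the last revision is not re-critiqued when the budget runs out.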

Related Posts

LLM Inference Optimization Part 4 — Production Serving

Production deployment with vLLM and TGI. Continuous Batching, Speculative Decoding, memory budget design, and throughput benchmarks.

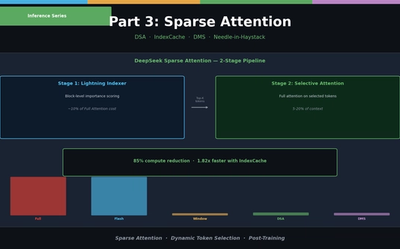

LLM Inference Optimization Part 3 — Sparse Attention in Practice

Sliding Window, Sink Attention, DeepSeek DSA, IndexCache, and Nvidia DMS. From dynamic token selection to Needle-in-a-Haystack evaluation.

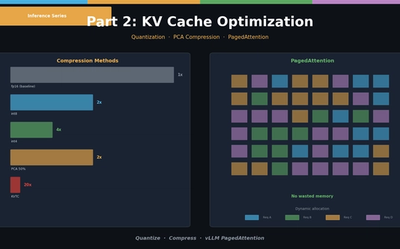

LLM Inference Optimization Part 2 — KV Cache Optimization

KV Cache quantization (int8/int4), PCA compression (KVTC), and PagedAttention (vLLM). Hands-on memory reduction code and scenario-based configuration guide.